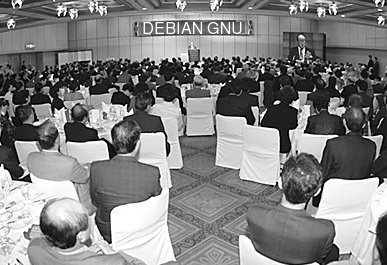

Hands-on Guide to the Debian GNU / Ubuntu / Devuan GNU+Linux Operating Systems

Davor Ocelic

Last update: Oct 2019 — Update some of the information to current state

Copyright (C) 2002-2019 Davor Ocelic, Spinlock Solutions

This documentation is free; you can redistribute it and/or modify it under the terms of the GNU General Public License as published by the Free Software Foundation; either version 2 of the License, or (at your option) any later version.

It is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU General Public License for more details.

You can find a copy of the GNU General Public License in the common-licenses directory on your system. If not, visit the GNU website.

The latest copy of this Guide can be found at http://techpubs.spinlocksolutions.com/dklar/.

This article is part of Spinlock Solutions's practical 4-piece introductory series containing the Debian GNU Guide, MIT Kerberos 5 Guide, OpenLDAP Guide and OpenAFS Guide.

- List of Examples

- 1-1. /etc/apt/sources.list

- 4-1. /etc/network/interfaces

- 4-2. /etc/ppp/peers/provider

- 4-3. /etc/chatscripts/provider

- 4-4. /etc/ppp/pap-secrets

- 4-5. /etc/wvdial.conf

- 4-6. /etc/gpm.conf

Preface

Welcome. The following Guide should help you make the first steps with Debian GNU, a Unix-like operating system.

If you encounter any unexpected problems, have patience and persistence. Unix is a colorful collection of more than 50 years of professional research and development applied to computer hardware and software, and there is a certain learning curve involved.

You need to learn the basics of Unix architectures and operating systems properly before tackling more advanced topics.

GNU/Linux has been progressing at an ever-increasing pace over the years, both technically and in widespread adoption, and it is easier than ever to download, install and start using a Linux-based operating system. Many underlying technologies have been successfully wrapped into graphical control panels, obscuring the technical workings of the system. Our goal here is to show you the technical aspects of the system and give you a level of understanding that goes far beyond graphical screens and management interfaces.

The Linux kernel and almost all other software are free ("free" as in freedom). This has allowed many projects and companies to package the Linux kernel and tens of thousands of applications in easily-installable and functional wholes called "distributions". Our distribution of choice will be Debian GNU/Linux.

Of all Linux distributions, why exactly Debian GNU? The system and community organization in Debian GNU outperform all competition by a wide margin. There are some Linux distributions, Unix operating systems (such as Sun Solaris or QNX) and kernels that do some specific tasks better, but Debian GNU is a general-purpose winner.

(In addition to Debian GNU, there are also two Debian derivatives available — Ubuntu and Devuan GNU+Linux. Ubuntu is a more desktop- or enterprise-oriented distribution, while Devuan GNU+Linux is a fork of Debian GNU without systemd.)

Also, we see that all interesting and exciting new developments are happening in the GNU/Linux arena; just focusing on Linux and the popular technologies (e.g. the LAMP stack: Linux, Apache or nginx, MySQL or PostgreSQL, and Ruby on Rails or Groovy Grails or Crystal Amber, plus Git) may turn out to be your cool, steady job and source of income.

You should read this Guide after you successfully install some variant of the Debian GNU system to your computer (with or without the help from the Debian installation manual).

The Guide is a balanced mix between the administrator's and the user's guide; it is probably too broad for those who belong to either of the two extreme categories. The approach used should fit home users best: people who do have a Debian installation at hand, and want to learn and experiment.

Our end goal is that you develop the mindset to solve further problems on your own; the basic understanding and general logic matter, not the exact implementation or usage details. In most general terms, we could say we'll try to explain the principles of Unix system design, and how they work in practice using command line and text processing utilities; we will not bother describing point-and-click GUIs and menus; such documentation is available elsewhere (on say, Gnome, XFCE, CDE or KDE websites).

In a Debian guide, we will not hesitate to use Debian-specific features and commands, but note that most of our discussion will, at least generally, apply to other Linux or Unix systems as well. Additionally, by saying this is a beginner's guide, we definitely won't restrict ourselves to system basics; this Guide is hiding many details even experienced users would find useful or amusing.

Please read the following two sections (the Section called Conventions and the Section called Pre-requisites) carefully, as they explain some basic ideas and assumptions followed throughout the Guide.

Conventions

Application names are specified in "application" style, which just happens to be plain text with no special formatting. The executables (system commands that you can run) are given in strong, command mode (such as free, top, or ps) and are, at places where it helps readability, enclosed in single quotes (say, 'rm'). At places where we make explicit references to manual pages (program documentation), we'll use the "name(section)" style, such as less(1). In practice, this means you can write man 1 less to read the manual page about the program called less, or even man 1 man to read more about man itself.

All file and directory names are given in "filename" style and, when the context requires exact location on the system, start with a slash("/") or tilde("~") character (/etc/syslog.conf, ~/.bash_profile, or /etc/init.d/). Although not mandated by system behavior, we consistently include "/" at the end of every directory name to make directories clearly distinguishable from regular files. Also, this notation helps eliminate ambiguity in commands that accept both a filename and a directory name as an argument.

Symbols that need to be replaced with your specific value use the REPLACEABLE style — they're simply given in all uppercase, and their final display depends on the enclosing element they're part of. In addition, they're slightly italicized. For example, in a shell command kill -9 PID, "PID" should be substituted with an appropriate value.

User session examples (consisting of user input and the corresponding system output) use the "screen" mode. User input is visually prefixed with "$" for user, and "#" for administrator commands. Program output is edited for brevity and has no prefix.

Unix, GNU, Debian, and Linux are words that can sometimes, depending on a context, be used interchangeably. Throughout the Guide, we will be consistent and, on each occasion, use the word with the broadest scope. For instance, we would talk about the Unix command line, GNU development tools, the Debian infrastructure, and Linux process management.

When a concrete system username will be needed to illustrate an example, user1 will play the role of an innocent user.

You'll notice that the Guide contains many links to external resources. This can make you unhappy if you find a lot of them interesting and get distracted from this Guide. Therefore:

We will always include the minimum of text, the part that is crucial to understanding the subject, directly in the Guide. External links will only provide more detailed information. As a result, it will be possible to read the Guide without following any external links.

We will group all links appearing in the Guide in a separate appendix at the end of the Guide, along with descriptions. That will allow you to focus on the Guide exclusively, and think about additional resources later.

Pre-requisites

To make sure you can successfully follow this guide, there are a few key points we need to agree upon. Let's assume that you:

installed Debian from scratch (scratch = new, clean install). If you did not, and you are reading this on an existing, usable Debian system, keep in mind that most of our basic configuration steps were already performed on your system. The easiest way to install is by using a bootable USB stick; obtain the .iso install image and write it directly to your USB stick using a command like dd if=netinst.iso of=/dev/YOUR_USB.

have the network properly configured. This is important if you have a decent Internet link and want to install software directly from the Debian repositories on the Internet or your local LAN. You are given the chance to configure the network and remote software repositories during the installation. These settings then carry over to the installed system

have just the base system installed (around 150 megabytes in total). To get a system like this, don't run dselect or tasksel at installation time. But if you do, and end up with a larger set of installed packages, keep in mind that most of the basic packages are already installed on your system

start working with the system by logging in to the superuser account (your login name is root, and the password is whatever you defined at installation time)

Chapter 1. Overview

"On our campus the UNIX system has proved to be not only an effective software tool, but an agent of technical and social change within the University."

--in memoriam, John Lions (University of New South Wales)

Debian GNU software distribution

Free Software distributions such as Debian GNU are comprised of a large number of Free Software programs. That's why, when you pick Debian GNU, you get not only a basic operating system, but a complete environment with much more than 50,000 precompiled, prepackaged and ready to use software programs. Exactly how this complex job gets done in practice is a subject for itself, but the important thing is it's only possible because all of the included programs are released under one of the free, DFSG-compliant licenses.

For you, the end user, this means all the software you'll ever need is already prepared, tested and waiting for you in the Debian repositories. Debian repositories consist of a standard directory hierarchy, software packages (*.deb files), and the accompanying meta data which describes the repository contents. Repositories themselves are not tied to a particular access method or storage medium, so you can access them from almost anywhere: the Internet, LAN, USB sticks, local hard disks, or CD/DVD Roms.

Advanced Package Tool

Most computer environments recognize the concept of software installation, and so does Debian GNU. Computer programs that you can install are, in a packaged form, archived in Debian repositories. Before use, they need to be retrieved from the repository, installed (unpacked onto the system and registered with the software management tool) and configured.

Debian specifically has developed a very sophisticated tool for this high level package management called APT (the Advanced Package Tool) that leaves most of the competition behind. Combined with an easy and straightforward configuration process, apt is surely one of the winning Debian ideas.

Configuring APT

There's very little you need to do to configure your Debian package management system, but it's a critical step and you need to get it right.

repository locations are defined in the file /etc/apt/sources.list and directory /etc/apt/sources.list.d/

if you have any Debian GNU CD/DVD Roms, run apt-cdrom add for each CD you place in the drive

the complete contents of /etc/apt/sources.list could look like the following (give or take minor variations between Debian/Devuan/Ubuntu):

Example 1-1. /etc/apt/sources.list

deb http://ftp.CC.debian.org/debian stable main
deb-src http://ftp.CC.debian.org/debian stable main
deb http://security.debian.org/ stable/updates main
deb http://ftp.CC.debian.org/debian testing main
deb-src http://ftp.CC.debian.org/debian testing main
deb http://security.debian.org/ testing/updates main
deb http://ftp.CC.debian.org/debian unstable main
deb-src http://ftp.CC.debian.org/debian unstable main

run apt-get update to make apt read the config, retrieve package indexes, and prepare local cache. Watch out for any error output.
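Before running apt-get update, it can help to check which entries in a sources.list are actually active. The following is a minimal sketch that works on a throwaway sample file rather than on the real /etc/apt/sources.list; the sample contents, and the use of ftp.debian.org in place of ftp.CC.debian.org, are assumptions made purely for illustration.

```shell
#!/bin/sh
# Work on a throwaway sample, not on the real /etc/apt/sources.list.
sample=$(mktemp)
cat > "$sample" <<'EOF'
# comment lines and blank lines are ignored by apt
deb http://ftp.debian.org/debian stable main
deb-src http://ftp.debian.org/debian stable main

deb http://security.debian.org/ stable/updates main
EOF

# Print type, URL and suite of every active entry.
entries=$(awk '$1 == "deb" || $1 == "deb-src" { print $1, $2, $3 }' "$sample")
echo "$entries"

# Count binary (deb) and source (deb-src) entries separately.
deb_count=$(grep -c '^deb ' "$sample")
src_count=$(grep -c '^deb-src ' "$sample")
echo "binary entries: $deb_count, source entries: $src_count"

rm -f "$sample"
```

Pointing the same awk and grep commands at /etc/apt/sources.list gives a quick overview of the real configuration.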

Having configured apt, you will now be able to install all the additional software you'll need. Just make sure to run apt-get update to start off with the up-to-date package lists (running it twice does no harm).

In recent versions of Debian, you can also use apt instead of apt-get. apt is a single command that supports the most common functions that were previously provided by multiple older/traditional commands such as apt-get, apt-cache, etc.

Chapter 2. First steps around the system

"Now I know someone out there is going to claim, 'Well then, UNIX is intuitive, because you only need to learn 5000 commands, and then everything else follows from that! Har har har!'"

--Andy Bates in comp.os.linux.misc, on "intuitive interfaces", slightly defending Macs

Base Debian GNU installation

If you have tried any of the other Linux distributions such as Ubuntu, Red Hat, Mandrake, or SuSE, you will notice that unlike them, Debian will allow you to install only the base/minimal system without any graphics (without the X Window System) and without a desktop (although the "d-i" installer will perform all hardware auto-detection for you).

With Debian GNU and the basic installation without the X Window System, you will get a small base system with the black console and a Login: prompt on the top left. We'll move from there on. (In case you are already using a system with the X Window System installed, you can press Ctrl+Alt+F1 to switch to the 1st text console, and from there you can press Alt+F7 (or Alt+F8 or Alt+F2) to get back to X.)

Shell and filesystem

When you log in to the system (authenticate typically with your user name and password at the Login: prompt), you'll be confronted with a text command line, something that might remind you of DOS, but that's where their similarity ends. What you are actually seeing is bash, one of the popular shell programs. In general, shell programs serve as agents between the user and the system (accept commands, return output) and are all, in fact, more or less sophisticated programming languages.

Computer software is based on files. Files live in directories (folders), which are located on virtual or physical, local or network disk partitions. In Unix, every system has a root partition which is mounted (think "associated") as the root directory (denoted by /) and serves as an entry point to the filesystem. For example, /home/user1/.profile (called the pathname, absolute path, or full path) tells us that there is a file named .profile which resides in the directory /home/user1/. In this example, user1/ is obviously a subdirectory of home/, and home/ is a subdirectory of / — the root directory.

Files beginning with a dot (such as .profile from just above) are called dotfiles. They usually contain configuration settings for various programs and are, as such, considered of secondary interest and usually omitted in directory listings. It's the magic dot (.) at the beginning that makes them "invisible" for the purpose of reducing "noise" in the output.

Each file in Unix belongs to one user and one user group. Additionally, there are file modes (or file permissions) that control which operations are allowed for the file's owner, the file's group, and everyone else. The only directories that regular system users can modify are their home directory (usually /home/USERNAME/) and system temporary directories (/tmp/ and /var/tmp/).

Unix filesystem permissions

Although in the traditional concept a file or directory belongs to one user and one group, various advancements have become available over time. Most notably, these include the POSIX Access Control Lists or Role-based Access Control solutions. ACLs allow files and directories to have potentially different permissions for every system user or group. RBAC systems allow users to be assigned "roles", and thus granted access to role-related files. However, the traditional concept that doesn't use ACLs or RBAC is still the default and offers quite surprising flexibility, rarely causing one to need more sophisticated permission models.
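To see the traditional owner/group/other model in action, here is a small sketch that sets and reads back the mode of a scratch file. The file name notes.txt and the mode 640 are arbitrary choices for illustration, and stat -c is specific to GNU coreutils (the default on Debian).

```shell
#!/bin/sh
# Create a scratch file in a private temporary directory.
dir=$(mktemp -d)
file="$dir/notes.txt"
echo "hello" > "$file"

# Owner may read and write, group may read, everyone else gets nothing.
chmod 640 "$file"

# The long listing shows mode, owner and group.
ls -l "$file"

# Read the same information back field by field (GNU stat syntax).
mode=$(stat -c '%a' "$file")       # numeric mode
symbolic=$(stat -c '%A' "$file")   # symbolic mode
owner=$(stat -c '%U' "$file")      # owning user
echo "mode=$mode symbolic=$symbolic owner=$owner"

rm -rf "$dir"
```

The three digits of the numeric mode correspond, in order, to the owner, the group, and everyone else.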

Concerning the filesystem "navigation", there's a concept of current directory which tells the active (working) position in the filesystem. You can discover the working directory at any time by typing pwd or echo $PWD in your shell. When you log in to the system and the shell starts up, it drops you to your home directory — that is thus your starting point.

In addition, with Unix, you generally don't need to invent the directory locations for the system software you want to install. When you install software, it is automatically installed to predetermined locations. Some of the directories you need to know about are:

/home/ - users' home directories

/etc/ - system-wide configuration files

/bin/, /usr/bin/, /usr/local/bin/ - directories with executable files (that is, programs you can run). bin comes from binary

/lib/, /usr/lib/, /usr/local/lib/ - shared libraries needed to support the applications

/sbin/, /usr/sbin/, /usr/local/sbin/ - directories with executables that require increased permissions and are generally supposed to be run by the Superuser

/tmp/, /var/tmp/ - temporary directories. Watch out here because /tmp/ is, by default, cleaned on each reboot

/usr/share/doc/, /usr/share/man/ - complete system documentation

/dev/ - system device files. In Unix, almost all hardware devices are represented as files somewhere under /dev/

/proc/ and /sys/ - "virtual" directories which don't really exist on disk. They display state directly from the kernel and can be used to get or set Linux kernel settings

Why software is installed to pre-determined locations is easy to explain: Unix and Unix filesystems allow every directory and subdirectory to be mounted to an arbitrary place — local disk partitions, network partitions, or RAM disks, to name a few.

When you need to manage the location of files and directories with finer granularity, Unix symbolic and hard links, GNU Stow, or dpkg-divert come into play (but they're all advanced concepts that we'll return to later).

In the above list of directories, you might have noticed a pattern. For example, there's a bin/ directory in all of /, /usr/ and /usr/local/. Directories and files found in the root directory (/) are official distribution files and are required for the system to boot up. Directories and files in /usr/ are also official distribution files, but are not essential for the system to boot up. A large majority of software belongs to this group. Note, though, that this is kind of a historic leftover, from times when systems were booted using the "root" tape, and then had another, "user" tape mounted on /usr/ later to add additional "user" software and applications. This division is, for historical reasons, still in use today, even though it has no practical use and it has not been possible to boot a regular Linux system without /usr/ for a number of years now. (Also, some even claim that it was never really in the spirit of GNU/Linux distributions to do so.)

Finally, directories in /usr/local/ contain locally installed software (which does not come from the official operating system distribution), hence the separate location and name "local". (In recent times, a directory named /opt/ has also been a common location for 3rd party packages to put their files in. The structure inside of it does not follow standard Unix rules, however, and is basically organized just as /opt/PROGNAME-VERSION/ with arbitrary structure beneath it.)

And there's also the share/ directory that you can see in the mentioned directories; it contains files which are hardware platform-independent and are the same on all supported architectures.

Manipulating software packages

Now, recall the apt tool we mentioned some paragraphs above. As you're left with a minimal system, you need to install a few packages to make your Unix life easier. Let's start by typing and executing apt-get install less man-db manpages vim etckeeper debsums ferm sudo in your shell.

To see what exactly each of the programs is used for, you could run apt-cache show PACKAGE NAMES. If the output scrolls out of your screen too fast, help yourself with the Shift+PageUP/DOWN keys. If the retrievable area is still too small, run the command with a buffer: apt-cache show PACKAGE NAMES | less (to exit the "pager" program less, you press q). Anyhow, it will soon become pretty obvious what the above programs are used for, but remember the tips we just mentioned (apt-cache, Shift+PageUP/DOWN, less).

For a list of all installed packages, run dpkg -l. To see a list of files installed by specific packages, run dpkg -L PACKAGE NAMES, such as dpkg -L debsums. To find out which package a file belongs to, run dpkg -S FULLPATH, such as dpkg -S /bin/bash.
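The dpkg queries above also lend themselves to scripting. The sketch below is a hedged example that skips itself on systems without dpkg; it uses the package dpkg itself as the subject simply because it is always installed on a Debian system.

```shell
#!/bin/sh
# Query the dpkg database; skip gracefully on systems without dpkg.
if command -v dpkg-query >/dev/null 2>&1; then
    # Name and version of one installed package (dpkg itself).
    dpkg-query -W -f='${Package} ${Version}\n' dpkg

    # Number of files and directories that the package installed.
    dpkg -L dpkg | wc -l

    # Number of fully installed packages (lines starting with "ii" in dpkg -l).
    dpkg -l | grep -c '^ii'
    status="queried"
else
    echo "dpkg not available on this system"
    status="skipped"
fi
```

dpkg-query is the lower-level tool behind dpkg -l; its -f option allows choosing exactly which fields get printed.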

To remove a package, run apt-get --purge remove PACKAGE NAMES. The --purge switch deletes any program config files (which are normally left on the system) as well as the program itself.

Nowadays, there is also the base command apt which supports installing packages with apt install. In its default configuration it uses a little bit more interactive output than apt-get.

Sometimes it's not that easy to guess package names, so you'll need to search for them (based on key words). To do so, use apt-cache search KEY WORDS, such as apt-cache search console ogg player.

Let's now make use of those programs we installed a moment ago.

Administrative account wrapper

Run the command id to determine your user name. You should see the UID 0 (unique User ID zero) and the username root. Unix traditionally maintains the concept of a superuser or root, a special account with UID 0, which is free to perform all administration tasks. This is a little different in practice with Linux because Linux uses a much more flexible capabilities mechanism, but the principle stays the same. Logging in as root is strongly discouraged, so we will now see how to avoid it. You will always use a regular system account, and only execute specific privileged commands by using sudo that will, by default, run administrative commands as root.

Create a non-privileged account now by running the adduser user1 command. Add it to the sudo group by running adduser user1 sudo. The sudo config file, /etc/sudoers, is configured to grant admin privileges to this group's members.

Alternatively, you can run echo "user1 ALL=(ALL) NOPASSWD: ALL" >> /etc/sudoers to grant admin privileges to the specific user "user1". The same effect can also be achieved by opening the file in a text editor (preferably through visudo, which checks the syntax before saving), adding the line, and saving it.

The list of a user's groups is established when a login session starts, so a newly added group does not take effect until that user completely logs out of the system and logs in anew. If running the command id does not show your new group ("sudo"), log out of all terminals and then log back in. The logout is done by exiting all shells, which means typing logout, exit, or pressing Ctrl+d in all active terminals. Alternatively, you can run newgrp sudo and then, in the resulting shell, newgrp YOUR_GROUP (your original primary group). This gives you a shell with the group 'sudo' listed in your additional groups.

To see the sudo system at work, run id; it should tell you that user1 is your active user ID. Then run sudo id, and see how the id program is run with root privileges. You will use sudo and you will not log in as the superuser any more.
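Whether the current session already carries the sudo group can also be checked without reading the id output by eye. This is only a sketch; the group name sudo is the one used in the text, while the variable names are ours.

```shell
#!/bin/sh
# Name of the user running this shell.
user=$(id -un)

# All groups of the current session, one per line.
groups_list=$(id -Gn | tr ' ' '\n')
echo "user:   $user"
echo "groups: $(echo "$groups_list" | tr '\n' ' ')"

# grep -x matches whole lines only, so "sudo" does not match e.g. "sudoers".
if echo "$groups_list" | grep -qx sudo; then
    in_sudo=yes
else
    in_sudo=no
fi
echo "member of group sudo in this session: $in_sudo"
```

If this prints "no" right after adduser user1 sudo, the session simply predates the change; log in again and re-run it.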

We will use sudo extensively; to edit system configuration files for example, you will use sudo nano /etc/FILENAME.

Unix text editors

Most of Unix system configuration is kept in plain text files, so you will definitely want to pick your favourite text editor out of the myriad available. You could start with joe, nano or pico (nano is probably the best, as it is included in the base Debian GNU system). These editors are simple and their common keystrokes are listed at the bottom of the screen. Try running nano now to see its simplicity for yourself. (Keep in mind that the "^" character, needed to access nano's menus, represents the Control key on your keyboard.)

The category of professional text editors, however, is reserved for the two old rivals: Richard Stallman's GNU Emacs and Bram Moolenaar's Vim, short for Vi IMproved (or the old traditional vi). Apart from having ultra fast keystrokes, macros, abbreviations, editing modes, syntax highlighting and keyword completion, Vim can literally solve its way out of a maze (take a look at /usr/share/doc/vim/macros/maze/ once). Another advantage of vi is that it's installed on just about every Unix system you can think of. References to the relevant Vim tutorial pages are given at the end of the Guide.

Since 2014 there has also been neovim in existence, which is a fork of Vim with additions, that strives to improve the extensibility and maintainability of Vim. It also supports different frontends, which were traditionally impossible or overly complex to do with Vim.

If you have no idea which editor to use, try using nano. Later on, you might get around to installing Vim and running vimtutor to learn its basics. But don't take this as a joke; Unix is about text (lots of text, even if you'll probably prefer GUIs at first), and learning Vim or GNU Emacs is an absolute necessity.

So invoke sudo update-alternatives --config editor now, and select your favourite text editor of the moment. (Once selected, this editor will be invoked when the generic editor command is run).

From now on, we'll assume you know how to open a file, change it, and save the changes back to disk (sudo nano /etc/FILENAME).

Basic system commands

You can't start wandering around the Unix system without being introduced to the basic available commands first. Now that you're using an unprivileged account and can't ruin your Debian GNU installation any more, we'll take a tour of system file and directory manipulation commands.

(This section has turned into an action-packed bunch; let's get into the right mindset and let's roll!)

To change directory, use the command cd. Run a few variants of it and verify the current directory with pwd or echo $PWD. Try and see where each of the commands cd, cd /etc, cd -, cd .., cd $OLDPWD, cd ../.., and cd ~ position you.

The special tilde character (~) in file names expands to the home directory of the user invoking the command. For user user1, the commands cd, cd ~, cd $HOME, and cd /home/user1 would do the same thing. In addition, if user1 wanted to reach user2's home directory, he would only have to type cd ~user2. (This ability to reach user's home directory using a syntax independent of actual system setup is another winning concept in Unix).

The dash (-) and the $OLDPWD environment variable reposition you to the previous working directory. Running the command multiple times would cycle between the two most recently used paths.
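The cd variants above can be traced in a scratch directory tree. This is a sketch under our own assumptions: the directory names a/ and b/ are arbitrary, and the tree lives in a temporary directory so nothing on the system is touched.

```shell
#!/bin/sh
# Build a small scratch tree: BASE/a/b (pwd normalizes the path name).
base=$(cd "$(mktemp -d)" && pwd)
mkdir -p "$base/a/b"

cd "$base/a/b"
first=$PWD            # BASE/a/b

cd ..                 # one level up
up_one=$PWD           # BASE/a

cd - > /dev/null      # back to the previous directory (cd - also prints it)
back=$PWD             # BASE/a/b again

cd ../..              # two levels up
up_two=$PWD           # BASE

cd ~                  # home directory, same as plain cd or cd "$HOME"
home=$PWD

echo "$first"; echo "$up_one"; echo "$back"; echo "$up_two"; echo "$home"

cd / && rm -rf "$base"
```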

You can check out the contents of a directory with the ls (list) command; try ls, ls -al /etc, or ls -al / (-a is needed to make ls display the dotfiles — those that start with a dot and are considered "hidden", remember? -l displays the output in list format).
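The effect of the dotfile rule is easy to observe in an empty scratch directory. In this sketch the file names visible.txt and .hidden are made up for illustration, and we use -A, a close relative of -a that shows dotfiles but leaves out the "." and ".." entries.

```shell
#!/bin/sh
dir=$(mktemp -d)
touch "$dir/visible.txt" "$dir/.hidden"

# Plain ls omits names that start with a dot (GNU ls behaviour).
plain=$(ls "$dir")

# -A includes dotfiles, minus the "." and ".." entries that -a would add.
all=$(ls -A "$dir")

echo "ls:    $plain"
echo "ls -A: $(echo "$all" | tr '\n' ' ')"

rm -rf "$dir"
```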

Create new files in your home directory using a text editor: use cd to reposition to your home directory and then run, say, nano test. Alternatively, you could use a variant of the command which is independent of the current working directory, nano ~/test for example. Try removing (deleting) the created files with rm. Create directories with mkdir, delete them with rm -r or rmdir.

Briefly note that the previous examples used your keyboard as the input and your monitor as the output device. It's important to understand that Unix can redirect such data streams to arbitrary locations (files, pipes, printers, network sockets and more). For example, run ls -al / | grep bin. The output of the ls -al / command will be piped or redirected to the next command, grep, which will filter the input and only display lines which contain the string "bin". This piping (a special case of redirection where output is redirected to a program) is denoted by the pipe ("|") character.
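Here is the same pipe idea in a form whose result is predictable on any machine. It is a sketch: instead of listing /, it lists a scratch directory whose four entries (bin, sbin, etc, home) we create ourselves.

```shell
#!/bin/sh
dir=$(mktemp -d)
mkdir "$dir/bin" "$dir/sbin" "$dir/etc" "$dir/home"

# ls writes one name per line when its output goes into a pipe;
# grep keeps only the lines containing "bin".
matches=$(ls "$dir" | grep bin)
echo "$matches"

# Pipelines can be extended: count the matching lines with wc -l.
count=$(ls "$dir" | grep bin | wc -l)
echo "matching entries: $count"

rm -rf "$dir"
```

Each command in the pipeline only sees the output of the command to its left, which is what makes such chains easy to build step by step.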

If you'd like to redirect the output of a command to a file (instead of to the next command, as the above pipe would do), use the > or >> redirects; the difference is that the double >> appends content to a file without truncating (erasing) it first. Try, say, ls -al / > /tmp/dirlisting. Note that the redirection may also be placed elsewhere on the line with equal effect, such as at the beginning of the line, i.e. > /tmp/dirlisting ls -al /.

An important thing to say is that each process usually starts with three data streams open; they're called the standard input, standard output and standard error, identified by system "file descriptor" numbers 0, 1 and 2 respectively. This is where the ">" redirects and "|" pipes come into play; they influence those descriptors. For example, ls -al / > /tmp/dirlisting (or "1>" instead of just ">") redirects standard output from the screen into a named file; ls -al 2> /tmp/errors redirects any error output to a different file; ls -al / | grep bin switches the standard input of "grep" from the keyboard to the output of the previous command. In addition, you can of course mix these, such as ls -al / | grep bin > /tmp/stdout 2> /tmp/stderr, and, depending on the shell you use, you can use ">&" or "&>" to redirect all output (stdout and stderr) at once. In a typical, non-redirected session, the location for all three channels is /dev/tty, a "magical" file that always corresponds to your current console or terminal.
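To watch the two output streams part ways, the following sketch asks ls to list one path that exists and one that does not. The name no-such-file is deliberately bogus, and 2>&1 is used as the portable spelling of "send stderr wherever stdout goes".

```shell
#!/bin/sh
dir=$(mktemp -d)

# ls prints the listing of "/" on stdout (descriptor 1) and the complaint
# about the missing path on stderr (descriptor 2); each goes to its own file.
ls / "$dir/no-such-file" > "$dir/stdout" 2> "$dir/stderr"
status=$?

# 2>&1 sends stderr to wherever stdout currently points, so both streams
# end up in the same file.
ls / "$dir/no-such-file" > "$dir/both" 2>&1

out_lines=$(wc -l < "$dir/stdout")
err_lines=$(wc -l < "$dir/stderr")
both_lines=$(wc -l < "$dir/both")
echo "exit status: $status"
echo "stdout lines: $out_lines, stderr lines: $err_lines, combined: $both_lines"

rm -rf "$dir"
```

Note that the order matters: > file 2>&1 captures both streams, whereas 2>&1 > file would leave stderr on the terminal.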

Sometimes you want the output to both appear on the screen and be saved in a file. This is Unix and it's easily done: ps aux | tee /tmp/ps-aux.listing. We can mention that, in the same way, the output can be "duplicated" to another console (yours or, subject to access permissions, one belonging to a different user). For those who wonder how to get the output on a printer, let's just say ps aux | lp is enough once the printer is configured.
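A small sketch of what tee does with its input, using three fixed lines of text instead of ps aux so that the result is the same everywhere. The file name copy.txt is an arbitrary choice.

```shell
#!/bin/sh
dir=$(mktemp -d)

# tee copies its input both to the named file and to its own stdout,
# which here continues down the pipeline into wc.
shown=$(printf 'one\ntwo\nthree\n' | tee "$dir/copy.txt" | wc -l)
saved=$(wc -l < "$dir/copy.txt")
echo "lines passed on: $shown, lines saved: $saved"

# tee -a appends instead of overwriting, like >> does for redirects.
echo "four" | tee -a "$dir/copy.txt" > /dev/null
total=$(wc -l < "$dir/copy.txt")
echo "lines after append: $total"

rm -rf "$dir"
```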

Some other times, you need to create an empty file. For example, try touch test2, > test2 or >> test2. The difference is that, if the file already existed, touch would just update its modification time, while the single redirection symbol would truncate it as well. Some people also use echo > test2, but they don't realize their file isn't exactly empty: it is 1 byte in size and contains one ASCII character, the "newline" (this happens because the echo command appends a newline to its output unless the -n option is given to it).

You now know four command line methods to create a file; try testing your friends' knowledge.
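The size differences between these methods can be verified with wc -c, which counts bytes. This is a sketch in a scratch directory; all file names (via_touch, keep, wipe and so on) are made up for the occasion.

```shell
#!/bin/sh
dir=$(mktemp -d)
cd "$dir"

touch via_touch          # creates the file, or only updates its timestamp
> via_truncate           # creates the file, or truncates an existing one
>> via_append            # creates the file, leaves existing content alone
echo > via_echo          # not empty: contains a single newline

size_touch=$(wc -c < via_touch)
size_trunc=$(wc -c < via_truncate)
size_append=$(wc -c < via_append)
size_echo=$(wc -c < via_echo)
echo "touch=$size_touch truncate=$size_trunc append=$size_append echo=$size_echo"

# On an existing file, > erases the content while >> keeps it.
echo data > keep;  >> keep;  size_keep=$(wc -c < keep)
echo data > wipe;  > wipe;   size_wipe=$(wc -c < wipe)
echo "after >>: $size_keep bytes, after >: $size_wipe bytes"

cd / && rm -rf "$dir"
```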

To save a copy of your interactive (user input-output) session in a file named typescript, just run script. To finish the typescript, just exit the shell (by say, pressing Ctrl+d on an empty line. As we've said earlier, Ctrl+d is a standard combination for "End of Input").

When you type say, ls, an instance of the /bin/ls program becomes a process (a running program). We'll cover processes thoroughly in a separate chapter but, for the moment, let's just say the program you run is assigned a unique process ID (the PID), it starts running on the processor (the CPU) and competes for the processor time with other running programs. To see your current processes, run ps. To see a complete list of processes on the system, run ps aux. To see process list including threads, run ps auxH. When the job is complete, the corresponding process terminates, and the PID number is reclaimed to the pool. For an interactive process monitor, see top. Within top, you can press "1" to see all processor cores, "M" to sort processes based on memory usage, or "P" to sort based on processor usage. For a more colorful version of top, try apt install htop.
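The life cycle of a process (it starts, gets a PID, and terminates) can be followed from the shell itself. The sketch below uses sleep 30 as a stand-in for any long-running program, and relies on kill -0, which sends no signal at all and merely reports whether the process exists.

```shell
#!/bin/sh
# $$ holds the PID of the shell itself.
echo "shell PID: $$"

# Start a long-running program in the background; $! is its PID.
sleep 30 &
pid=$!
echo "background sleep PID: $pid"

# kill -0 sends no signal; it only checks that the process exists.
if kill -0 "$pid" 2>/dev/null; then alive_before=yes; else alive_before=no; fi

# Terminate the process and wait for the shell to collect its exit status.
kill "$pid"
wait "$pid" 2>/dev/null

if kill -0 "$pid" 2>/dev/null; then alive_after=yes; else alive_after=no; fi
echo "alive before kill: $alive_before, after kill: $alive_after"
```

While the sleep is still running, ps would list it under the PID printed above.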

Check system memory information with free. If you get into total/free memory mathematics, just keep in mind a lot of used memory is not a bad thing and it doesn't mean your system is wasting it; we'll discuss this later in the Guide.

Additional and interesting programs related to system and memory status that you could install are sysstat and memstat.

To see a list of mounted filesystems, their mount points and mount options, simply run mount. To see disk usage statistics, run df.

| Note that mount only reads a file, /etc/mtab, and "prettifies" it a little before display. In turn, /etc/mtab should contain content that is essentially the same as /proc/mounts, but this is not always the case (for example, if /etc/ was mounted read-only at the time when mount wanted to update the file). Unless you're really adventurous, you won't happen to see incorrect output from the mount command, but remember that only /proc/mounts tells the definite truth. |

Now, let's say you wanted to execute commands uptime, free and df in one go. You could do this simply by running uptime; echo; free; echo; df. (The semicolon ";" is the important part here: it lets us put multiple commands on one line, and they run one after another. The echo command only serves to produce empty lines in between.)

Use w or who to see the list of current system users. Run last -20 to see the last 20 logins to the system. If you'd like an interactive variant of w (in the top fashion), use the general purpose watch command: watch -n 1 w (finish by pressing Ctrl+c, the generic "break" signal). watch is pretty interesting; by using quotes, you could run watch -n 1 'uptime; echo; free; echo; df' as well.

Run uname -a to see the most general system information - the hardware and kernel types and versions. For the run time statistics, run uptime; the program reports the current time, time since the machine boot (the machine uptime), the number of users logged in and the load (processor usage) averages for the last 1, 5 and 15 minutes.

To bring the idea to yet another level, let's say you had a complex chain of commands saved in a file, and wanted to execute the whole file at once (in "batch" mode, as people would say). This is, of course, easily doable with the piping principle mentioned above. Let's first run echo -e "uptime; echo\nfree; echo\ndf" > commands to create our "batch" file. (Additionally, run cat commands to see what the file really looks like and to understand the effect of the newline character \n). To "batch-execute" it, all we would have to do is run cat commands | sh, or sh < commands, or just sh commands. What's more, if you set the executable bit on the batch file (chmod +x commands) and added an appropriate "shebang" line to the file (by opening it and inserting #!/bin/bash at the top), you could run it by invoking ./commands or, more generally, /path/to/commands.
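The whole sequence can be tried safely in a scratch directory. In this sketch the batch file contains two harmless echo commands instead of uptime, free and df, printf is used in place of echo -e, and #!/bin/sh is used as the shebang line; all three are substitutions made here for predictability, not part of the recipe above.

```shell
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# Create the batch file; printf interprets \n the same way in every POSIX shell.
printf 'echo one\necho two\n' > commands

# Three equivalent ways to feed the file to a shell.
out_pipe=$(cat commands | sh)
out_redirect=$(sh < commands)
out_arg=$(sh commands)

# Make it directly executable: prepend a shebang line and set the x bit.
printf '#!/bin/sh\n' | cat - commands > commands.new && mv commands.new commands
chmod +x commands
out_exec=$(./commands)
echo "$out_exec"
```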

Let's cover some more stuff, starting with the shell history. The commands you type, provided that they do not start with a space, are saved in shell history. The history can be viewed with history, navigated with keys Arrow-Up/Arrow-Down and searched by pressing Ctrl+r and typing in some part of the searched command. Also, the output of history numbers the previously executed commands. You can re-execute them by simply typing "!NUMBER", such as !1, !14, !-2, !ls or !!. The first two execute the command corresponding to the number; !-2 executes the command before last, !ls executes the last command that started with "ls" and "!!" is a synonym for !-1 (re-execution of the last command). Results of the "!" expansion can be combined with other input, so a command like ls; !! -al would in fact execute ls; ls -al .

Pressing Alt+. or Esc+_ would insert the last argument from the previous line into the current line. For example, if you ran mkdir DIR_NAME, then typing cd and pressing Esc+_ would expand to cd DIR_NAME. Additionally, to insert an arbitrary word from the previous line into the current line, you would use !:NUM, where NUM starts from 0 (word 0 being the command name itself).

There's another cool trick allowing you to correct the previous line. For example, if you typed ls- al and got a "bash: ls-: command not found", one obvious way to correct it would be to press Arrow-Up and replace "- " with " -". The other solution, though, is correcting the previous line using carets: try running the shown invalid command (ls- al) and immediately after that run ^- ^ -^. The carets ("^") will make the specified substitution in the previous line and execute it.

The shell history of executed commands is saved to a history file (usually ~/.bash_history) when the shell program cleanly exits, and is thus preserved between sessions. To prevent history from being written, you can terminate the shell forcibly with kill -9 $$ ("$$" expands to the PID of the current shell, and kill -9 will forcibly terminate it). kill -9 0 has the same effect in a typical interactive session, but note that "0" stands for every process in the shell's process group, not just the shell itself.

Shells also support a nice model called "aliases". You can alias any text to a shorter form, and you can then access the alias, the unaliased command (in case of the same name) and append extra arguments. Here's how: you define an alias with the syntax alias NAME='COMMAND ARGS....', such as alias today='find -maxdepth 1 -mtime -1', which would find all files in the current directory that have been modified within one day. To invoke this, you could simply call the new aliased command, today. You can also alias existing commands. For example, to alias the remove command ("rm") to always prompt for confirmation before deleting, you would run alias rm='rm -i'. Then you could invoke rm FILE..., and it'd automatically mean rm -i FILE.... In case you want to use the original "rm" while the alias is defined, prepend the command with a backslash, \rm FILE.... Read more about Bash aliases with help alias.

You can see the list of defined aliases by simply typing alias, and you can check exactly what gets executed when you run a command with type COMMAND.... Aliases do not persist across sessions, so you have to define them in the shell startup files, such as ~/.bashrc or ~/.bash_profile for the Bash shell. Note that aliases are not the only way of packing multiple or long commands in an easily-accessible shorter form: you can do the same (and more) with shell script files, in which you type commands exactly as if you were executing them on the command line. Your ~/bin/ directory is the right place for this, as the programs copied there will be automatically searched by the shell when you log in (see ~/.profile or ~/.bash_profile for how it happens).
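The script-file alternative to an alias can be sketched as follows. A scratch directory stands in for ~/bin so that your real home directory is left alone; the directory layout and the file name fresh-file are made up for the demonstration.

```shell
workdir=$(mktemp -d)
mkdir "$workdir/bin" "$workdir/data"

# A tiny script doing the same job as the "today" alias.
cat > "$workdir/bin/today" <<'EOF'
#!/bin/sh
# List entries in the current directory modified within the last day.
find . -maxdepth 1 -mtime -1
EOF
chmod +x "$workdir/bin/today"

# Put the directory on the search path, as the login scripts do for ~/bin.
PATH="$workdir/bin:$PATH"

cd "$workdir/data" || exit 1
touch fresh-file
listing=$(today)
echo "$listing"
```

Unlike an alias, such a script is also available to other programs and to non-interactive shells, since it is found through PATH like any other command.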

Shells also support a feature called "backticks", invoked with the `` quotes. Text within backticks is executed as a separate command first, and its output is inserted in the original command, which is then executed. For example, to display the current date, you can simply run date. However, to demonstrate the point, you can also write echo `date`. In that case, date would be run first, and its output would not be printed to the screen but inserted in the parent command as plain text, such as echo Tue Feb 2 11:19:50 CET 2010. The echo command would then print it to the screen. In this simple example, the final effect is the same, but the principle is not.
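A short sketch of the same principle with the result captured in variables. The $(...) form shown next to the backticks is not mentioned above; it is the equivalent POSIX syntax and is included here because it nests more easily. The variable names are arbitrary.

```shell
# Backticks: the inner command runs first and its output replaces it.
year_backticks=`date +%Y`

# $(...) is the equivalent form and is easier to nest.
year_dollar=$(date +%Y)

# Nesting: the inner substitution (basename of /usr/share/doc) runs first.
nested=$(echo "dir: $(basename /usr/share/doc)")

echo "It is year $year_dollar; $nested"
```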

Another thing to mention is searching for files and executing commands on them. Let's say you wanted to search for all MP3 files in your home directory. You'd do this with cd; find . -name '*.mp3'. The first argument to "find" is the starting directory. In case it's the current directory, you can use "." or (with GNU find) omit the argument altogether. To perform a case insensitive search, use -iname instead of -name.

Another thing you want to do with the files found is run some command on them. One (lame) way to move all MP3 files to a separate directory would be mv `find -name '*.mp3'` /some/directory. However, many MP3 files have spaces and various non-standard characters in their names, so this command would crash and burn. Besides just not working (because it would treat "abc def" as two files, "abc" and "def"), a specially crafted filename could wreak havoc. For example, a " -f " fragment in an MP3 filename would actually be understood as the --force option to "mv". So the first line of defense is to use "--" after "mv", which tells the command that everything that follows is just the list of filenames, not command options. But the problem of spaces and special characters in the filename would still bite us, because the shell splits the output of the backticks on the characters listed in its "IFS" variable (by default space, tab and newline).

So to eliminate the whole issue, the proper and superior way to do this, one that is not vulnerable to file names or anything else, is as follows: find -iname '*.mp3' -print0 | xargs -0 mv -t /some/directory. The "-print0" option to "find" will use "\0" (the null character) as the record separator; option "-0" to xargs will make xargs understand that, and xargs will then run the specified command with the found filenames as arguments. Xargs appends all filenames at the end of the specified command, even honoring maximum line lengths (so you avoid the "Argument list too long" errors). If the command you're invoking does not support multiple filenames, or you want to run it on a file by file basis, pass the "-n 1" option to xargs. Finally, if the filename is supposed to be inserted in the middle of the xargs command line (and not automatically at the end), pass "-I {}" to xargs and use "{}" to mark the point of insertion.
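The NUL-separated pipeline can be tried on a few throwaway files. This sketch assumes GNU findutils and GNU coreutils (as shipped with Debian), since mv -t is a GNU option; the directory and file names are invented for the test.

```shell
workdir=$(mktemp -d)
mkdir "$workdir/music" "$workdir/dest"
cd "$workdir/music" || exit 1

# File names containing spaces and an option-like fragment, plus a non-MP3 file.
touch 'abc def.mp3' 'song -f x.MP3' 'notes.txt'

# NUL-separated names survive spaces; "mv -t DIR" takes the destination
# first, so xargs can append the file names at the end.
find . -iname '*.mp3' -print0 | xargs -0 mv -t "$workdir/dest"

# Per-file variant with an explicit insertion point: -I {} runs one command per name.
find "$workdir/dest" -type f -print0 | xargs -0 -I {} echo "moved: {}"
```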

OK, fine. Unix programs come in many variants, and with many options (as has been demonstrated in this section ;-). They usually have a large set of supported options and successfully interact with each other, effectively multiplying their feature lists. I've read somewhere an interesting thought: that a Unix program can be considered successful if it becomes used in situations never predicted by its author. All of the programs I mentioned above are crucial to Unix and are successful. Their full potential greatly exceeds the basic usage examples we provided, and it is implicitly assumed you will look up their documentation (man PROG_NAME) for more detailed information, which is also the topic of our next chapter.

System documentation and reference

Debian GNU is a free and open system. All the issues that might pose a problem for you are already documented and explained somewhere. Surely a milestone in your Unix experience is learning where those information sources are, how to interpret their contents, and generally, how to help yourself in predicted and unpredicted situations without someone handholding you.

Unix documentation is extensive and easily available. Most of it is written by the software authors themselves (and not by the nearest marketing department) so you actually have the privilege of communicating with the authors themselves. The pool of people who write Free Software programs and documentation includes a large community of technology professionals.

The process starts with you reading the provided documentation to see what the software authors have to say to you, and to pick up a little of their mindset. Debian pays special attention to the documentation; if it's not available from the upstream (original) author, then the Debian developers write the missing pieces themselves.

In Debian, each package you install creates a /usr/share/doc/PACKAGE/ directory and places at least the changelog files there. The directory in addition often contains the INSTALL, README and other upstream files which are sometimes irrelevant for you, as the tasks described there have already been performed by the Debian developers (but the rest of the notes, of course, still provide good information about the program). What you definitely are looking for are Debian-specific notes (in README.Debian files). This is the first place you visit for more information about a package. It is not uncommon that Debian packages see an enormous "added value" from maintainer-provided README files and practical recipes. Sometimes, if the documentation is big, a standard naming convention is followed and the documentation is distributed in a separate PACKAGE-doc package.

The other, very often used, part of the documentation is the system manual pages, accessible with the man and info commands. Man follows the traditional Unix manpage approach while info is the GNU-style texinfo collection. Man pages are sorted by volumes or sections which include user commands, system calls, subroutines, devices, file formats, games, misc and system administration topics. The man and info systems don't read each other's manual pages, but coexist peacefully on your system, mostly in a way that the info pages are ignored on your part. One of the reasons for this, in my opinion, is the very annoying info user interface (unless you're accustomed to GNU Emacs, in which case you'd describe the feeling as normal). To remedy the problem a little, try installing the alternative pinfo browser (sudo aptitude install pinfo).

To get a feeling for manpages, try running man mkdir. You'll notice all manpages follow a pretty standard structure; they often include NAME, SYNOPSIS (Usage), DESCRIPTION, AUTHOR, BUGS, COPYRIGHT and SEE ALSO sections. The specific mkdir page you opened tells you that all program options (those starting with "-" or "--") are optional (denoted by square brackets, [], in the synopsis line), but at least one directory name to create is mandatory (required). In some manpages, mandatory options are enclosed in <less/greater-than> symbols (like <-s size>).

See the man ps or pinfo ls commands. It is absolutely necessary to develop the habit of reading manpages. Whenever we mention a system command or a config file, we implicitly expect you to skim through its manpage. Novices have trouble understanding the manual pages; even though all the information they want to learn is right there, in the manpage, they have a hard time connecting heads to tails. If you happen to have this problem, keep reading — and given enough material you'll naturally come to understanding!

Sometimes you only want a general and short, one-line description of a program. See whatis cp or whatis df du. If you're looking for a particular functionality but don't know the actual command name, try using apropos. As it searches for manpages that satisfy any of the key words you enter, it tends to return large sets of results, so restrict your searches to a single keyword, like apropos usage or apropos rename (or ideally, run man apropos and learn how to specify search mode).

If you feel you need to ask the community a question, you can either use the various mailing lists (MLs) or the "real time" Internet Relay Chat (IRC). Probably more than a few mailing lists exist for any program or project you may have a question about, but the mailing lists are not suitable for informal discussion and amateur help requests (to be correct, no one says they're not — but in a few years time, you surely won't appreciate Google or AltaVista first linking your name to such content).

IRC is your best bet to go over "runtime" issues (although on some IRC channels, there are now bots that collect logs and publish them online, so they have the same problem as mailing lists). Install the ircii (text-mode) or xchat or hexchat (graphical) package, run it, type /server irc.gnu.org to connect and join the Debian channel by typing /join #debian once you're connected to the server. There are always hundreds of people present on the channel; if your questions are meaningful and don't require people to answer in essays, they'll probably be helpful to you. Spend time on the channel, learn from other people's questions and answers, and don't add to the channel noise. Exit ircii by typing /quit. Mind you, the mere possibility of presenting your question before a technically proficient audience is a great privilege and, of course, you must follow some minimum of the protocol: it's not required that you are familiar with the subject (if that was the case, you wouldn't be asking a question in the first place), but do read Eric Raymond's How to Ask Questions the Smart Way to increase your chances of receiving useful answers.

Chapter 3. Basic system administration tasks

"The learning and knowledge that we have, is, at the most, but little compared with that of which we are ignorant."

--Plato, 427-347 BC

Booting the machine; runlevels and system services

The process of booting a machine starts with the computer loading system BIOS (or PROM, on Unix architectures) code from a known and fixed address in memory. Once that is done, BIOS tries to run user-specified code which is usually a bootloader (the thing that lets you choose the Operating System you want to boot).

In most common scenarios, you have GRUB (GRand Unified Bootloader) or LILO (Linux LOader) installed as the bootloader. Both GRUB and LILO accept parameters on the command line, but in Debian the bootloaders are configured not to show the boot prompt. To make it appear, hold the Alt, Ctrl or Shift (depending on the bootloader!) key at the 'LILO' or 'GRUB' message (during boot, just before it continues with the 'Loading linux ....'), and you'll be able to pass arbitrary parameters to the kernel. For GRUB, this is achieved by pressing "e" to edit the entry, then "e" to edit the "kernel" line, and "b" to boot.

You can play and pass anything to the kernel via this command line; it won't cause harm unless you happen to choose a name that some part of the system actually uses, such as acpi, mem, root, hda or panic. Your value will be visible in the file /proc/cmdline later when the system boots.
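A script can later pick such a value out of the command line. The sketch below works on a made-up string so that it behaves the same everywhere; on a real system you would read /proc/cmdline instead. The parameter name myflag and all values are hypothetical.

```shell
# Hypothetical command line; on a real system use: cmdline=$(cat /proc/cmdline)
cmdline='BOOT_IMAGE=/vmlinuz root=/dev/sda1 ro quiet myflag=42'

myflag=''
# The unquoted expansion splits the string into words on whitespace.
for word in $cmdline; do
    case $word in
        myflag=*) myflag=${word#myflag=} ;;   # strip the "myflag=" prefix
    esac
done
echo "myflag is: $myflag"
```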

After the kernel is loaded, it will start the init program which is the first process started on almost all Unix systems (as such, it has a PID of 1), and is active as long as the system is running.

| As soon as the kernel initializes the keyboard driver, you will be able to pause terminal output by pressing Ctrl+s. This will allow you to stop boot messages scrolling and peacefully examine the output on the screen. Ctrl+q will have the effect of "releasing" the terminal. Keep in mind that you can always use this trick in a terminal (it's not related to the boot-up phase), and the Scroll Lock key on your keyboard has the same effect as Ctrl+s/Ctrl+q. |

And then, in relation to init, we come to system runlevels.

| Please note that this section describes the SysV init, and not systemd. As such, it is accurate for Devuan GNU+Linux and old Debian and Ubuntu releases, but not for newer Debian and Ubuntu versions which use systemd. |

Debian GNU uses the so-called SysV (System V, read as "system five") init system by default. It means that runlevels are represented as directories (/etc/rc?.d/), and the directories consist of symbolic links to files in the "main" /etc/init.d/ directory; here's an example:

$ ls -la /etc/rc2.d/ | cut -b 57-

...

S20net-acct -> ../init.d/net-acct

S20openldapd -> ../init.d/openldapd

S20postgresql -> ../init.d/postgresql

...

The 'S' prefix starts a service, while 'K' stops it (for the given runlevel). The numbers determine the order in which the scripts are run (0 being the first).
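The ordering rule can be imitated in a scratch directory. This is only a simulation of the directory layout, not what init itself runs; the service names alpha and beta are invented, and the scripts are run through sh so that the sketch does not depend on execute permissions.

```shell
workdir=$(mktemp -d)
mkdir "$workdir/init.d" "$workdir/rc2.d"

# Two stand-in init scripts (hypothetical service names).
for svc in alpha beta; do
    printf '#!/bin/sh\necho "%s $1"\n' "$svc" > "$workdir/init.d/$svc"
done

# Runlevel directory: symlinks whose names encode the action (S/K) and the order.
ln -s ../init.d/beta  "$workdir/rc2.d/S10beta"
ln -s ../init.d/alpha "$workdir/rc2.d/S20alpha"

# The glob expands in sorted order, so S10beta runs before S20alpha.
order=$(for link in "$workdir"/rc2.d/S*; do sh "$link" start; done)
echo "$order"
```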

init then executes local scripts from /etc/rc.boot/ and performs the rest of the init tasks specified in /etc/inittab (in older versions, /etc/bootmisc.sh was also run). At that point, the system is booted, you see the Login: prompt, and life is great even more than usual.

Debian GNU provides a convenient tool to manage runlevels (to control when services are started and shut down); it's called update-rc.d and there are two commonly used invocation methods:

# update-rc.d -f cron remove

# update-rc.d cron defaults

The first line shows how to remove the cron service from startup; the second sets it back. Cron is very interesting; it's a scheduler that can automatically run your tasks at arbitrary times (even when the machine is completely unattended, of course). It definitely deserves a paragraph in this Guide and indeed, we'll get back to it later.

So, all files in /etc/init.d/ share a common invocation syntax (which is defined by Debian GNU Policy) and can, of course, be run manually - you don't have to wait for init to call them. All system services have their init scripts in the /etc/init.d/ directory; the scripts are usually named after the services themselves, and they accept a set of generic arguments. Let's see an example:

# ls -al /etc/init.d/s* | cut -b 55-

/etc/init.d/sendsigs

/etc/init.d/setserial

/etc/init.d/single

/etc/init.d/skeleton

/etc/init.d/sudo

/etc/init.d/sysklogd

# /etc/init.d/sysklogd stop

Stopping system log daemon: syslogd.

# /etc/init.d/sysklogd start

Starting system log daemon: syslogd.

# /etc/init.d/sysklogd invalid

Usage: /etc/init.d/sysklogd {start|stop|reload|restart|force-reload|reload-or-restart}
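The common invocation syntax usually comes down to a case statement on the first argument. The following is a bare-bones sketch of that shape for a hypothetical service called demo; real init scripts do considerably more (locating the daemon, handling PID files, and so on).

```shell
workdir=$(mktemp -d)

# A stand-in init script for a hypothetical service called "demo".
cat > "$workdir/demo" <<'EOF'
#!/bin/sh
case "$1" in
    start)   echo "Starting demo service." ;;
    stop)    echo "Stopping demo service." ;;
    restart) sh "$0" stop; sh "$0" start ;;
    *)       echo "Usage: $0 {start|stop|restart}" >&2; exit 1 ;;
esac
EOF

start_msg=$(sh "$workdir/demo" start)
echo "$start_msg"

# An unknown argument prints the usage line on stderr and fails.
sh "$workdir/demo" invalid 2>/dev/null
invalid_status=$?
echo "exit status for an invalid argument: $invalid_status"
```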


Now that we've covered the basics of a system boot process, we can move on to a subject that just logically follows.

Virtual consoles

Almost all Free Software distributions ship with predefined 'virtual terminals', completely separate text screens or consoles which are available with left Alt + F1-F6 keystrokes (only about 6 consoles are enabled by default). Keep in mind that it is also possible to switch between the consoles from the command line (see the chvt command) and that you can open new consoles automatically (from scripts or otherwise) with the openvt command (called open on older systems). Some proprietary Unices use the Ctrl + Alt + 1-6 combination (standard numeric keys instead of Function keys).

To enable more virtual consoles than what you get by default, run sudo editor /etc/inittab and add more lines like these:

5:23:respawn:/sbin/getty 38400 tty5

6:23:respawn:/sbin/getty 38400 tty6

[You can see which fields have to be incremented]. For changes in that file to take effect, exit the text editor and type telinit q.
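Each inittab line has four colon-separated fields: id, runlevels, action and process. As a sketch, the following generates lines for two further consoles (7 and 8, chosen arbitrarily) and splits one of them back into its fields; it only prints text and does not touch /etc/inittab.

```shell
# Generate getty lines for consoles 7 and 8 in the id:runlevels:action:process format.
lines=$(for n in 7 8; do
    printf '%s:23:respawn:/sbin/getty 38400 tty%s\n' "$n" "$n"
done)
echo "$lines"

# Split the first line back into its four fields to show what each one means.
first=$(printf '%s\n' "$lines" | head -n 1)
IFS=: read -r id runlevels action process <<EOF
$first
EOF
echo "id=$id runlevels=$runlevels action=$action process=$process"
```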

If you create more than 12 consoles, you won't be able to access them with left Alt (since the last F key you have is 12), so use Right Alt key to reach consoles 13 - 24. You can also use Alt + left_arrow or Alt + right_arrow to cycle through open consoles. Alt + Print_Screen key switches between two last used virtual consoles.

We did not cover any of the X Window System (Unix graphical interface) stuff yet, but just remember that you'll need to use Ctrl+Alt instead of just Alt to switch from an X window to the console.

The deallocvt command frees memory still associated with virtual terminals which are no longer in use [by applications, not you of course], although this is probably not so important nowadays due to insane amounts of RAM in personal computers.

Some more useful stuff that you can do with the consoles include changing the VGA font size. This can be quickly achieved by running something like consolechars -f lat1-08, where the available fonts are in the /usr/share/consolefonts/ directory.

The other way is to pass "vga=ask" at the GRUB boot prompt (or to run lilo -R 'linux vga=ask' for LILO before reboot), upon which the system will show a menu during boot and you'll be able to select the font size (size of 6 is small and fine). The lilo -R command sets the parameters (linux vga=ask) for the next boot only. So when you find a nice VGA mode, you should edit /etc/lilo.conf and make it permanent there:

image=/vmlinuz

label=Linux

read-only

# Just add the line below

append="vga=X"

[X is replaced with the actual value you like, 6 for example]. Then, run lilo to apply changes (not forgetting sudo, of course).

In case of GRUB, you would do this by editing the commented "kopt=" line in /boot/grub/menu.lst and running update-grub.

It's also possible to drive the console in the high-resolution VESA mode, but that's quite tricky to set up and doesn't justify inclusion in our Guide.

Furthermore, if you see the penguin in the upper left corner of your screen while the system is booting, or the console text pointer is a blinking rectangle (instead of just a blinking underscore), then you are using a "framebuffer" graphics mode. In that case, there are more screen modes available to you, but they are just not listed in the boot-time selection menu; see the table on the Framebuffer HOWTO page for the full list. There's also fbset command available (from the fbset package) to control the framebuffer behavior.

| In the simplest terms, framebuffers directly map a portion of RAM onto the graphics display device. The amount of memory occupied equals horizontal resolution * vertical resolution * bits per pixel (which gives the size in bits; divide by 8 for bytes). All you need to do in order to draw to the screen, then, is modify the appropriate locations in RAM. Even though this framebuffer idea is very nice (and framebuffers play a much more important role on other architectures than they play on PCs), and even though framebuffers can be really fast, they never gained much popularity on GNU/Linux PCs. They are generally too hard to tune properly, and the Linux framebuffer documentation is terribly poor (the best starting point is the Programming Linux Games book by John R. Hall, Loki software). However, since all VESA2 cards support framebuffers, during one period (from year 1998 to 2001, roughly) framebuffers were often used to run X graphical interfaces on graphics cards for which no free software drivers existed yet; they were slow and all (of course, because they didn't employ any acceleration techniques), but still allowed running displays with normal resolution and color depths (as opposed to the also-supported VGA mode, which only allowed a 640x480 resolution in 16 colors). Nowadays, framebuffers are mostly used in Operating System installation programs that hope to achieve greater compatibility on a wide range of hardware. |
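To put a number on the memory formula from the note above, here is a small worked example using shell arithmetic. The mode (1024x768 at 16 bits per pixel) is an assumed example, not a value taken from this Guide.

```shell
# Example mode (assumed values): 1024x768 at 16 bits per pixel.
width=1024
height=768
bpp=16

bits=$((width * height * bpp))
bytes=$((bits / 8))          # 8 bits per byte
kib=$((bytes / 1024))

echo "${width}x${height} at ${bpp} bpp needs $bytes bytes ($kib KiB)"
```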

Besides the mentioned font size, you can also change font types. Install the fonter package and you will be able to edit and create your own fonts by running fonter, or use some of the standard ones you get:

$ consolechars -f /usr/share/fonter/crakrjak.fnt

$ consolechars -f /usr/share/fonter/elite.fnt

$ consolechars -f iso01.f16

Also nice to know is that you can easily change the mapping of keyboard keys. To see current keyboard mappings, simply run dumpkeys > keymap; editor keymap.

After you tune the keymap file to your needs, load it back with the loadkeys ./keymap command.

To see just how great the console is, run the loadkeys program, and type the following in its prompt:

string F1 = "Hello, World!"

[Ctrl+d]

Then just press the F1 key to see the consequences.

Note that this is not the best you can do with the console, it's just a small collection of quick and useful tricks to show you the direction to look into. If you're interested in more console tricks, take a look at (some of) setterm(1), stty(1), tput(1), tset(1) and possibly tic(1).

System messages and log files

Unix systems have a standard message logging interface, and all programs can freely use it. Besides having the advantage of being unified and easily parseable by the log monitoring programs, syslog messages offer a very convenient way to manually monitor overall system status and learn a lot about the system in general.

There are many actual implementations available but they're all commonly known as syslog daemons (daemon = server). In essence, each message contains the facility (category) and priority (importance) information (along with the message text itself, of course). The facilities (message categories) recognized are auth, authpriv, cron, daemon, ftp, kern, lpr, mail, mark, news, syslog, user, uucp or local0 - local7, and the priorities are debug, info, notice, warning, err, crit, alert or emerg.

Messages can be generated by the kernel, by programs, or by system users. When the message reaches the syslog daemon, it is:

prepended with date, time and source information,

matched against the syslog config file rules, and

distributed accordingly. Common actions include writing messages to log files or named pipes, echoing to all (or selected) users' consoles, or forwarding to another computer.

The default Debian syslog daemon used to be a variant of the traditional BSD (Berkeley Software Distribution) syslog daemon called sysklogd. Nowadays Debian uses rsyslog.

| On a BSD Note |

Note, however, that this is not technically correct; morons.org say LSD is not a Berkeley product (it's from Sandoz), and J.S. Anderson is anonymous, but the quote is still widely cited and worth mentioning.

So, following the consistent naming scheme, the rsyslog config file resides in /etc/rsyslog.conf. If you open it, you'll recognize a simple structure: selectors (facility.severity pairs) associated with actions (output destinations). For the rest of the config file details, see the rsyslog.conf(5) man page.

For both an educational example and a practical result, we're going to make two simple changes to the syslog configuration file:

move the ppp (Point-to-Point Protocol) messages to a separate log file, /var/log/ppp.log, and

make all messages also appear on one of our text consoles (for easy log monitoring).

To accomplish our first goal, simply run echo "local2.* TAB /var/log/ppp.log" >> /etc/rsyslog.conf as root (where you replace "TAB" with the actual Tab character by pressing Ctrl+v, Tab). Since pppd logs to facility local2, this rule will send all of its messages (regardless of the severity) to a separate file.

For the second part, run echo "*.* TAB /dev/tty12" >> /etc/rsyslog.conf. That rule will output a copy of every message to your 12th console, /dev/tty12. That console should be empty and unused by other software, but note that technically you can have both a valid login console and syslog messages on the same terminal; the output would just get cluttered eventually. To clear it up, you could use Ctrl+l, the standard "clear screen" key combination.
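If typing a literal Tab is awkward, printf can produce it from \t. The sketch below appends both rules to a scratch file instead of the real configuration file, so the result can be inspected first; using a temporary file is a choice made for this example only.

```shell
# Scratch file standing in for the real syslog configuration file.
conf=$(mktemp)

# printf turns \t into a real Tab, so no Ctrl+v trick is needed.
printf 'local2.*\t/var/log/ppp.log\n' >> "$conf"
printf '*.*\t/dev/tty12\n'            >> "$conf"

cat "$conf"
```

Once the lines look right, the same printf commands can be pointed at the real file from a root shell.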

Now, since changes to the config files are generally never automatically detected by the programs that use them, we need to tell the syslog daemon to reload its configuration. We will use the standard /etc/init.d/ interface, which we've talked about already. Simply run sudo invoke-rc.d rsyslog reload or sudo invoke-rc.d rsyslog restart, for the changes to take effect. (Using invoke-rc.d is even better than using things like /etc/init.d/rsyslog reload directly as it does not depend on a particular init scheme).

Even by observing the logs of a seemingly idle (inactive) system, you'll see there actually are periodic jobs run by the cron daemon (system scheduler). Besides that, try running any privileged command (something as simple as sudo ls) and switch to your 12th console to see how it gets logged.

If you want to send your own messages to syslog, use the logger program (part of the bsdutils package). Try running logger -i -p user.info -- This is a test message.

If you are planning to use the X graphical interface, switching to the 12th console might not be the most convenient way to monitor system messages; your monitor or LCD display needs to adjust to a new pixel frequency every time you switch consoles; it takes a second or two to do that and it starts getting annoying after the initial amusement. It is possible to solve that by making the frequencies match, but that's out of the scope of this Guide. Our solution to the problem will consist of running X applications such as root-tail instead, which monitor log files and print messages to your root window (the X background).

To round off the section, we could just mention that all the log files are usually kept under the /var/log/ directory, and all of the messages you watched appear on your 12th console were also saved in one (or even more) of those files. You could figure out the purpose of each log file by looking at /etc/rsyslog.conf.

Particularly interesting is the /var/log/dmesg file - it keeps a copy of the messages that scrolled by at system boot time. You can also use the dmesg command, but instead of the bootup messages, it will display the last few kilobytes of kernel messages (which might or might not be the same as /var/log/dmesg contents, depending on the activity the system saw in the meantime).

Actually, newer systems also sport the bootlogd daemon that takes proper care of saving bootup messages. If bootlogd is enabled in the /etc/default/bootlogd file, the complete boot log will be saved to /var/log/boot.

Deeper look at the Debian package tools

Earlier in the Guide, we mentioned some of the truly basic package management commands along with their most used options. However, Debian offers a much wider range of packaging-related tools.

dpkg, the medium-level package manager for Debian, offers some more low-level functions than apt-get, and roughly corresponds to the rpm command on RPM-based (Red Hat Package Manager) Linux distributions.

Most notably, dpkg does not have any automatic package retrieval methods. To install a package with dpkg (say, package vim), you would first have to download the .deb package yourself and then run something like dpkg -i vim_6.0.093-1.deb. dpkg doesn't resolve dependencies for you either, so in this example, package vim could be unpacked but its configuration would be delayed until you install all the packages it depends on. dpkg is, however, still indispensable for lower-level management and definitely worth the tour.

dpkg -r vim would remove the package if there are no installed programs that depend on it. Configuration files for the package (those listed as conffiles in the package control files) are left on the system. dpkg --purge vim would remove vim along with configuration. dpkg --configure --pending would configure all pending packages (for example, those that were left waiting for processing after an unsuccessful dpkg run).

Sometimes it's useful to copy the package list from one machine to another and get all the same software installed on the other system (or simply keep a list somewhere for future reference). Use dpkg --get-selections > list to retrieve the list, and later dpkg --set-selections < list; apt-get dselect-upgrade to load the list and trigger installation. Note that dpkg --set-selections reads the list from standard input, hence the redirection.

It's also possible to put packages on hold, meaning you don't want the system to touch them. Use echo vim hold | dpkg --set-selections. To make it available for upgrading again, run echo vim install | dpkg --set-selections.
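The selection list is plain text, one package per line, with the package name followed by its state (install, hold, deinstall or purge). As a minimal sketch, assuming a made-up sample list (the package names below are for illustration only and do not come from a real system), ordinary text tools can pick out the packages on hold before the list is loaded on another machine:

```shell
# Hypothetical sample in the same two-column layout that
# "dpkg --get-selections" prints: package name, whitespace, state.
list=$(mktemp)
cat > "$list" <<'EOF'
bash                install
vim                 hold
oldpkg              deinstall
linux-image-amd64   hold
EOF

# Print the names of all packages whose state is "hold".
held=$(awk '$2 == "hold" { print $1 }' "$list")
echo "$held"

rm -f "$list"
```

On a real system the same filter could read from dpkg directly: dpkg --get-selections | awk '$2 == "hold" { print $1 }'.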

Sometimes a dpkg action can't be performed because of missing dependencies, duplicate files or something like that. Use the --force-all option with dpkg to ignore the problem and continue. This option can be used everywhere with dpkg, but it often leads to package database corruption (specifically, version mismatches) and total dependency chaos. If you later plan to use apt-get, never use this option as it instantly breaks apt (you can, however, try apt-get -f install and apt will do its best to clean up the mess).

dpkg-reconfigure can be used to reconfigure debconf-enabled packages (those which use debconf to ask questions and get answers about the local configuration). Use dpkg-reconfigure vim to reconfigure vim. dpkg-reconfigure debconf would reconfigure debconf itself. You can choose between a few types of interactive or non-interactive package configuration modes. Non-interactive mode is very useful if you are performing mass or automated installations.

Tip: Sometimes (due to a bug in a specific package's debconf interface), you won't be able to successfully configure the package; this is likely to happen from time to time if you use the Debian unstable tree. Common examples would be 'Accept' buttons which don't actually accept any input, or text fields which are (again, by mistake) always considered empty. A possible hack solution for this kind of problem is to reconfigure debconf to non-interactive, then configure the problematic package, and finally reconfigure debconf back to some sort of interactive mode. Packages have matured over the years though, and I cannot recall any recent occurrence of this problem.

Tip: You will most probably be using this command to reconfigure the X Window System every now and then, so just remember it as the elegant Debian-specific way to deal with the configuration: dpkg-reconfigure xserver-xorg

Sometimes it's also useful to see a recursive dependency listing for a package. This feature is provided by the apt-rdepends package.

To reinstall a package, use either dpkg -i as shown above or apt-get --reinstall install PACKAGE NAMES. To install a specific version or branch of a package, run apt-get install vim=6.0.093-1 or apt-get install vim/testing.

To upgrade the system, you usually run apt-get update; apt-get upgrade. To upgrade only specific packages, run apt-get install PACKAGE NAMES or debfoster -u PACKAGE NAMES.

grep-dctrl is another tool in the stash. It can answer questions such as "What is the Debian package foo?", "Which version of the Debian package vim is now current?", "Which Debian packages does John Doe maintain?", "Which Debian packages are somehow related to the Scheme programming language?", and "Who maintains the essential packages of a Debian system?". See its manual page for more information.

To remove unnecessary Debian packages (unused libraries left on the system etc.) from your system, run debfoster or deborphan.

The dpkg-repack package provides a tool to bundle installed packages back into the .deb format. If any changes have been made to the package's files since it was unpacked (such as files in /etc modified), the new package will, of course, inherit the changes. This utility makes it easy to copy packages from one computer to another, or to recreate packages that are installed on your system but no longer available elsewhere.

Use dpkg-divert to override a package's version of a specific file. You could use it to override some package's configuration file, or whenever some files (which aren't marked as 'conffiles' in the Debian package) need to be preserved by dpkg when installing a newer version of the package. In addition to (or instead of) dpkg-divert, you can use dpkg-statoverride to override ownership and permissions (and suid bits, of course) of installed files. Using this technique, you could also allow program execution only to a restricted user group.

Debian package files format

Even though Debian GNU packages are best manipulated using appropriate Debian package tools, it's quite useful to be introduced to their internal "constitution".

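Debian package files (.deb files) need no special tools to be manipulated; they are simple ar archives containing a small debian-binary file (the format version) and two tarballs: control.tar.gz and data.tar.gz. (Newer packages may compress the tarballs with xz or zstd instead of gzip, in which case the names end in .xz or .zst.) In other words, the generic tools needed to extract .deb contents are ar, tar and gzip, which are present on just about every Unix system.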

To extract package data, run dpkg -x package.deb /tmp/PACKAGE. To extract package control section, run dpkg -e package.deb.

If you have no dpkg at your disposal, you can extract the data section using ar to extract the data tarball, and tar/gzip to unpack it: ar x package.deb data.tar.gz; tar zxf data.tar.gz. In the same way, you can extract the control.tar.gz section. (Run ar t package.deb first to see the member names if they differ.)
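The manual procedure can be wrapped into a small shell function. This is only a sketch under stated assumptions: the function name extract_deb_data is made up for this example, and it relies on a tar that detects the compression type by itself when given -xf (GNU tar does), so that it also copes with data.tar.xz members.

```shell
# extract_deb_data DEB DEST  (hypothetical helper, not part of any package)
# Unpack the data section of DEB into directory DEST using only ar and tar.
# Assumes a tar that auto-detects compression with -xf (GNU tar does).
extract_deb_data() {
    deb=$1
    dest=$2
    if [ ! -f "$deb" ]; then
        echo "extract_deb_data: no such file: $deb" >&2
        return 1
    fi
    # ar is run inside a scratch directory below, so make the path absolute.
    case $deb in
        /*) ;;
        *) deb=$PWD/$deb ;;
    esac
    # Find the data member, whatever its compression suffix is.
    member=$(ar t "$deb" | grep '^data\.tar') || return 1
    scratch=$(mktemp -d) || return 1
    mkdir -p "$dest" &&
        ( cd "$scratch" && ar x "$deb" "$member" ) &&
        tar -xf "$scratch/$member" -C "$dest"
    status=$?
    rm -rf "$scratch"
    return "$status"
}

# Example call (not executed here):
#   extract_deb_data package.deb /tmp/PACKAGE
```

Replacing the pattern '^data\.tar' with '^control\.tar' would unpack the control section instead.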

Please Note: You might need to use the above procedure in practice if, while upgrading the GNU libc package, you do something silly and end up in a half-installed state with no /sbin/ldconfig command (so all of the "heavier" programs start refusing to run). If that's why you are reading this, then one solution is to unpack the libc6 package manually and copy the ldconfig command back in place (to /sbin/). The other (and easier) thing you can do is temporarily create a placeholder /sbin/ldconfig file which simply returns success.
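A minimal sketch of such a placeholder follows. It is written to a scratch location rather than to /sbin/ldconfig so that it can be tried safely; the path and the variable name are illustrative assumptions. On the broken system the target would be /sbin/ldconfig, and the file should be replaced by the real ldconfig once the libc6 upgrade has completed.

```shell
# Illustrative scratch path; on the affected system this would be /sbin/ldconfig.
stub="${TMPDIR:-/tmp}/ldconfig-placeholder"

# A two-line shell script that does nothing and exits with status 0 (success).
printf '#!/bin/sh\nexit 0\n' > "$stub"
chmod 755 "$stub"

# Run it and show the exit status.
"$stub"
echo "exit status: $?"
```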

Useful extra packages

Two useful programs worth mentioning are vrms and popularity-contest. vrms notifies you of non-free packages installed on your system (ideally, there should be none!). popularity-contest produces weekly package usage statistics (frequency of use, etc.) and anonymously e-mails them to Debian, thus automating part of the feedback from the user base. The statistics are used, for example, to decide on the distribution of Debian GNU packages on CD-ROMs.

Monitoring installed files for correctness

Each Debian package inserts control information into the package database (the /var/lib/dpkg/ directory). Among the values stored are the MD5 sums of all installed files. (File "sums" or "digests" are results of a one-way function, MD5 in our case, and for practical purposes identify the file contents.) When a file's digest is compared to the previous good value from the database, we can immediately notice if the file contents (and contents alone, not other attributes like mode or ownership) have been changed, whether as a consequence of legitimate system operation, a software/hardware bug, or a successful break-in.

For packages that do not have MD5 sums already generated (there are a few such cases), the sums can be generated directly at your site, during installation. (Debconf will present you with an appropriate question when you install debsums.)

The most common use is to run debsums PACKAGE to verify an individual package, or debsums -s to verify all packages and only display checksum mismatches. See the debsums man page for more information on available options and possible use.
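The kind of comparison involved can be illustrated with plain md5sum. The following is a sketch of the idea on a scratch file (the file names are made up for this example), not a description of how debsums is implemented. It records a known-good digest, then shows that a permission change goes unnoticed while a content change is detected.

```shell
# Scratch directory standing in for a package's installed files.
workdir=$(mktemp -d)
printf 'color=blue\n' > "$workdir/demo.conf"

# Record the known-good digest, much like a package's md5sums file does.
( cd "$workdir" && md5sum demo.conf > demo.md5sums )

# check: succeed only if the file still matches its recorded digest.
check() { ( cd "$workdir" && md5sum -c demo.md5sums >/dev/null 2>&1 ); }

if check; then before=unchanged; else before=CHANGED; fi

# Changing the mode does not alter the contents, so the digest still matches.
chmod 600 "$workdir/demo.conf"
if check; then after_chmod=unchanged; else after_chmod=CHANGED; fi

# Changing the contents is detected.
printf 'color=red\n' > "$workdir/demo.conf"
if check; then after_edit=unchanged; else after_edit=CHANGED; fi

echo "initially: $before, after chmod: $after_chmod, after edit: $after_edit"
rm -rf "$workdir"
```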

If you wish to change a file's checksum, you no longer need to develop your own tools to edit /var/lib/dpkg/info/PACKAGE.md5sums files; newer debsums packages ship with the debsums_gen command.

Two more programs worth mentioning are changetrack and etckeeper. Etckeeper is a bit more advanced; it is used to put your whole /etc directory under revision control. To install and initialize it, run sudo aptitude install etckeeper; sudo etckeeper init; cd /etc && sudo git commit -am Initial. After that, you can see pending changes in /etc by cd-ing into it and running git status or git diff (as root) at any time, and you can see previous, committed changes by running git log or git log -p. You can discard pending changes to any file, reverting it to the last committed version, with git checkout FILENAME.

Shutting down the system

Recall that we have mentioned and briefly explained runlevels above. In Unix, system halt (shutdown) is simply runlevel 0, system reboot is runlevel 6.

To shut down the machine, any of shutdown -h now, halt, poweroff or init 0 will do. To reboot the system, shutdown -r now, reboot or init 6 are okay. Additionally, you can use Ctrl+Alt+Del (in the console) to reboot; this behavior is controlled by the /etc/inittab file (run init q to reload the file if you change it). Sometimes you want to cancel an ongoing shutdown; you can do it as long as your console is active. In that case, run shutdown -c or, say, init 2.

Chapter 4. Interaction with system hardware

"In the UNIX world, people tend to interpret 'non-technical user' as meaning someone who's only ever written one device driver."

--Daniel Pead

Introduction

There are many computer architectures available. Most of them have a cleaner design and stronger characteristics than the nowadays-standard Intel-compatible Personal Computer, and a number of them were in existence before the PC was "invented". Today, though, we see that the PC-compatible processor series has taken over the workstation and server market.

On a side note, here is a quote from the 80s, from "The Autodesk File" by John Walker, about the PC's predecessor:

Coming to Terms with the 8086

It's become clear that the plague called the 8086 architecture has sufficiently entrenched itself that it's not going to go away. For the last month or more, Mike Riddle, John Walker, Keith Marcelius, and Greg Lutz have been bashing their collective heads against it. The following is collected information on this unfortunate machine.

I think we'd be wise to diffuse our 8086 knowledge among as many people as possible. The main reference for the 8086 is a book called, imaginatively enough, The 8086 Book published by Osborne. This is the architecture and instruction set reference, but does not give sufficient information to write assembly code (of which, more later). However, it is the starting point to understand the machine. AI will reimburse the cost of your buying this book, which is available at computer and electronic stores.

I have never encountered a machine so hard to understand, one where the most basic decisions in designing a program are made so unnecessarily difficult, where the memory architecture seems deliberately designed to obstruct the programmer, where the instruction set seems contrived to induce the maximum confusion, and where the assembler is so bizarre and baroque that once you've decided what bits you want in memory you can't figure out how to get the assembler to put them there.

A list of other general-purpose architectures (besides the Intel-compatible) would include Motorola 68000 (m68k), Sun Microsystems SPARC (sparc), Digital Equipment Alpha (alpha), Motorola/IBM PowerPC (ppc or powerpc), Silicon Graphics/DEC MIPS (mips and mipsel), Intel IA-64 (ia64) and AMD 64 (amd64). This list is by no means complete; it's only a random selection of architectures already supported by Debian GNU.

Nowadays, if you want to use a high-end non-PC architecture, your best bet is one of the IBM POWER9 workstations available from Raptor Computing. The architecture is known as "ppc64le" (the corresponding Debian port is named "ppc64el").

So, given different architectures which are not binary-compatible (that is, where programs are not compatible across architecture boundaries), how do you get the same software running on all of them? We are, of course, interested in re-using existing applications: those that have a tradition, more features, and more hours in production than anything we would be able to create ourselves. The key to the problem is porting: changing an existing code base so that it makes provisions for the new target platform (at places where any specific handling is necessary). Given proper education, writing portable software is easy; porting ("behaving" existing software), however, can be a source of great despair. Sometimes you first have to deal with unacceptably poor programming practices and flawed design before you even get to the portability issues.